The Death of the Dashboard: Why Thin SaaS Dies in the Age of Agentic Execution

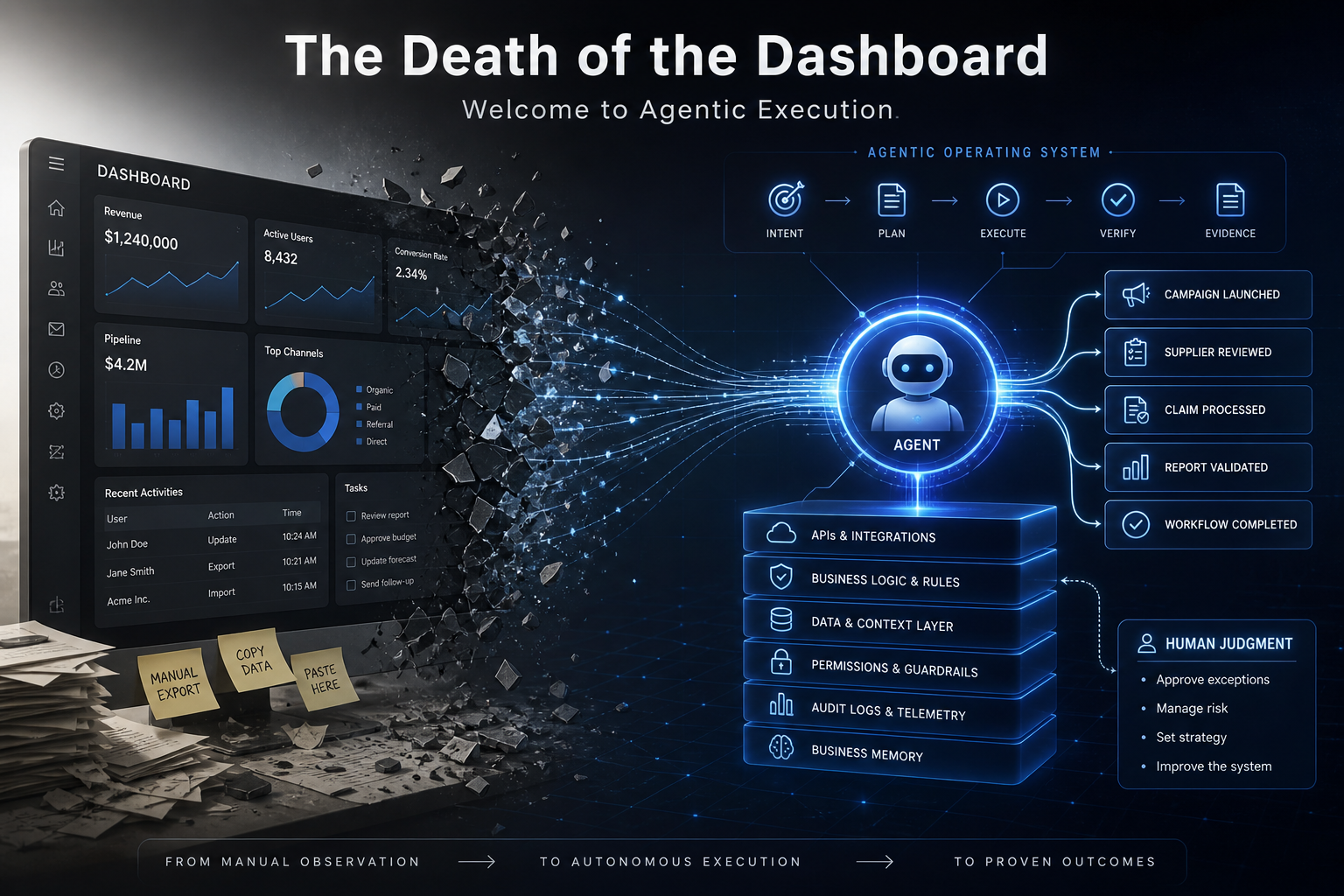

Dashboards once helped humans act faster. In an AI-native operating model, the real advantage is no longer interface polish—it is agent-legible infrastructure, outcome telemetry, and intent-to-artifact execution.

The dashboard used to be the control room.

That made sense when software needed a human operator in the middle of every decision. The system gathered data. The dashboard displayed it. A manager interpreted the numbers. Someone clicked the button. Another person copied the output into another tool.

That operating model is collapsing.

Not because dashboards became ugly. Not because users stopped needing clarity. Because the primary executor changed.

In an AI-native business, the most important consumer of your systems is no longer only the human eye. It is the agent that reads state, calls tools, follows policy, executes a workflow, and returns evidence. When that becomes possible, the dashboard stops being the center of the product. It becomes one surface among many. Sometimes it becomes unnecessary.

This is the real death of the dashboard: not the disappearance of visual interfaces, but the end of the dashboard as the default operating layer of digital work.

The new question is not:

“Can the user understand the interface?”

The better question is:

“Can the system understand the intent, execute the workflow, prove the result, and surface only the decisions that require human judgment?”

That shift kills thin SaaS. It weakens prompt wrappers. It forces every software company to move from interface ownership to workflow ownership.

Key Highlights

- Intent-to-artifact latency becomes the strategic metric. The business should measure how long it takes for a decision to become a verified artifact: code shipped, campaign launched, claim processed, supplier reviewed, report validated, or workflow completed.

- Dashboards are no longer the operating layer. They remain useful for inspection, governance, and exceptions. They fail when they become a manual bridge between systems.

- Thin SaaS loses defensibility. A tool that only stores data, visualizes records, or wraps a prompt cannot justify premium pricing when AI-native systems can produce the same output inside a broader workflow.

- Agent-legible infrastructure becomes the moat. APIs, schemas, permissions, event logs, evaluations, and business memory matter more than decorative UI.

- Senior talent moves from task execution to contextual stewardship. Operators do less clicking. They define intent, approve exceptions, manage risk, and improve the system’s judgment surface.

The Research Gap: AI Adoption Is High. Enterprise Value Is Still Uneven.

The market has already moved past “Will companies use AI?” Stanford’s 2025 AI Index reported that 78% of organizations used AI in 2024, up from 55% the year before, while global private investment in generative AI reached $33.9 billion. (Stanford HAI) McKinsey’s 2025 global survey pushed the adoption picture further: 88% of respondents said their organizations regularly use AI in at least one business function. (McKinsey & Company)

But adoption does not equal operational transformation.

McKinsey also found that only about one-third of organizations had begun scaling AI across the enterprise, and just 39% reported enterprise-level EBIT impact. The same survey showed that 23% were scaling agentic AI somewhere in the enterprise and another 39% were experimenting with agents. (McKinsey & Company)

That gap matters.

It means the constraint is not access to models. The constraint is operating architecture. Most companies can generate text, summarize data, draft plans, and prototype interfaces. Fewer can connect those capabilities to durable workflows, permissioned systems, business rules, and measurable outcomes.

The winners will not be the companies with the most AI features. They will be the companies that redesign the work around AI execution.

McKinsey’s data points in that direction: high performers are more likely to redesign workflows, define when model outputs need human validation, embed AI into business processes, and track KPIs for AI solutions. (McKinsey & Company) That is not a tooling story. It is a systems story.

The Dashboard Was Built for the Human Bottleneck

The classic SaaS dashboard solved a specific historical problem.

Business systems created more data than operators could manually inspect. Dashboards compressed that data into charts, tables, filters, and alerts. They gave humans a faster way to perceive the state of the business.

That was valuable when the workflow still depended on a person to decide and act.

A sales dashboard showed pipeline risk. A sales manager changed the forecast. A finance dashboard showed cash variance. A controller investigated. A marketing dashboard showed campaign performance. A growth operator adjusted spend. A support dashboard showed backlog pressure. A team lead reassigned tickets.

The dashboard did not execute the work. It prepared the human to execute the work.

That distinction now matters.

When an agent can monitor state, evaluate thresholds, retrieve context, call tools, draft changes, trigger workflows, and request approval, the dashboard no longer deserves to be the center of the system. The center becomes the execution loop.

A dashboard tells a person what happened.

An operating system decides what must happen next, starts the work, records the evidence, and escalates only when judgment matters.

The Relocation of Resistance

Every serious automation wave moves the bottleneck.

Spreadsheets moved resistance from calculation to data quality. Cloud software moved resistance from installation to process design. APIs moved resistance from manual transfer to integration governance. AI moves resistance from execution speed to clarity of intent.

When execution gets cheaper, vague strategy becomes expensive.

This is where many organizations break. They want autonomous execution, but they still describe work in ambiguous language:

- “Improve retention.”

- “Make the onboarding better.”

- “Find growth opportunities.”

- “Clean up the pipeline.”

- “Optimize operations.”

- “Prepare an investor-grade review.”

A senior operator can infer the missing context. An AI agent should not be forced to guess it.

If the intent lacks constraints, definitions, approval rules, available tools, data boundaries, and success criteria, the agent will do one of three things: ask too many questions, hallucinate confidence, or execute the wrong version of the task.

That is the new enterprise failure mode.

The problem is no longer “our people are too slow.”

The problem is “our systems cannot translate business intent into safe execution.”

Thin SaaS Is a Symptom of the Old World

Thin SaaS has three common forms:

- Database-with-a-dashboard: The product stores records and gives users a cleaner way to browse them.

- Prompt-wrapper software: The product sends user inputs to a model and returns a polished output.

- Workflow-adjacent tools: The product generates a memo, report, plan, or analysis, but leaves execution to the user.

These products worked when digital capability itself was scarce.

They struggle when AI becomes infrastructure.

If a founder can generate a strategy memo inside a native model interface, “strategy generation” is not a product. It is a feature. If a marketer can generate campaign concepts, ad copy, and landing page variations with a general-purpose model, the value cannot sit in drafting. If an operator can ask an AI assistant to summarize records, the value cannot sit in a static reporting screen.

The product must own a harder part of the workflow.

It must connect strategy to execution. It must carry memory across decisions. It must enforce business rules. It must measure value events. It must integrate with the systems where work actually happens. It must reduce the need for human glue.

The SaaS spending data reinforces this pressure. BetterCloud’s 2025 State of SaaS report found that organizations used an average of 106 SaaS tools, down from 112, indicating consolidation. (BetterCloud) Zylo reported that average SaaS spend reached $4,830 per employee, up 21.9% year over year, while organizations wasted an average of $21 million annually on unused SaaS licenses. (Zylo) Tropic’s software buying data showed that AI-native spend grew 94% year over year in mid-market and enterprise companies, while primarily SaaS spend grew only 8%; among SMB and growth companies, primarily SaaS spend declined 8%. (tropicapp.io)

The market is not rejecting software. It is rejecting software that does not own the outcome.

The New Unit of Value: From Seat to System

Legacy SaaS pricing assumes the user is the unit of value.

More users means more seats. More seats means more revenue. More logins means more activity. More activity means more perceived adoption.

That logic weakens when agents do work that used to require multiple people.

If a system executes ten hours of operational work without ten human users logging in, seat count no longer reflects value. If the system reviews documents, updates CRM stages, triggers compliance workflows, and prepares executive summaries, the value sits in completed work, not user attendance.

This requires a measurement change.

Do not optimize for monthly active users as the primary signal. Measure value events:

- A qualified lead routed to the correct owner.

- A regulatory filing assembled and validated.

- A churn-risk account reviewed with recommended actions.

- A supplier exception detected, explained, and escalated.

- A campaign launched with tracking, budget rules, and rollback logic.

- A venture review completed with evidence, assumptions, and next actions.

The system should answer one question:

“What business result did we complete, and what evidence proves it?”

This is why outcome-based telemetry becomes a core product capability. Without it, AI features become theater. With it, the product can prove execution.
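One way to make that question answerable is to refuse to record a value event that arrives without evidence. The sketch below is illustrative only: the `ValueEvent` class, its field names, and the example evidence link are assumptions, not a description of any specific product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ValueEvent:
    """A completed business result plus the evidence that proves it (hypothetical shape)."""
    kind: str                   # e.g. "lead_routed", "filing_validated"
    workflow_id: str
    evidence: tuple[str, ...]   # links or IDs of artifacts that prove completion
    completed_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        # An outcome without proof is only a claim, so reject it up front.
        if not self.evidence:
            raise ValueError(f"value event {self.kind!r} has no evidence attached")


# Hypothetical example: a qualified lead routed to the correct owner.
event = ValueEvent(
    kind="lead_routed",
    workflow_id="wf-001",
    evidence=("crm://leads/123/assignment",),
)
```

The design choice is small but deliberate: the evidence requirement lives in the data model, so no caller can log a "completed" outcome that nobody can verify.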

Agent-Legible Infrastructure: The Real Moat

Agentic systems do not need prettier dashboards first. They need legible infrastructure.

OpenAI’s Agents SDK documentation defines agents as applications that plan, call tools, collaborate across specialists, and keep enough state to complete multi-step work. It also makes clear that serious agent applications require ownership over orchestration, tool execution, approvals, and state. (OpenAI Developers) Anthropic’s Model Context Protocol was introduced as an open standard for secure, two-way connections between data sources and AI-powered tools. (anthropic.com) Google’s Agent2Agent protocol was launched to allow agents to communicate, securely exchange information, and coordinate actions across enterprise platforms. (Google Developers Blog)

These standards point to the same structural truth: the next layer of software must be machine-readable, tool-accessible, permission-aware, and stateful.

The OpenAPI Specification already frames this clearly for APIs: a standard interface description allows both humans and computers to discover and understand service capabilities without source code, extra documentation, or traffic inspection. (OpenAPI Initiative Publications) Structured outputs push the same discipline into model responses by forcing outputs to conform to a JSON Schema instead of relying on loose text. (OpenAI Developers)

This is the architecture of agent legibility.

It includes:

- Typed APIs that agents can call safely.

- Clear schemas that define entities, relationships, states, and allowed transitions.

- Permission layers that separate suggestion, preparation, approval, and execution.

- Event logs that record what happened, when it happened, who or what initiated it, and why.

- Idempotent actions that prevent repeated agent calls from creating duplicate damage.

- Evaluation suites that test output quality before deployment.

- Human review gates at high-risk nodes.

- Business memory that preserves decisions, constraints, operating principles, and historical context.

A dashboard can sit on top of this. It cannot replace it.
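Two items from the list above, idempotent actions and event logs, fit in a few lines. This is a minimal in-memory sketch under stated assumptions: `ActionLog`, `run_once`, and the idempotency key format are hypothetical names, and a real system would persist both the log and the stored results.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class ActionLog:
    """In-memory stand-in for a durable event log (assumption for illustration)."""
    events: list[dict] = field(default_factory=list)
    results: dict[str, str] = field(default_factory=dict)

    def run_once(self, key: str, actor: str, reason: str, action: Callable[[], str]) -> str:
        # A repeated call with the same key returns the stored result
        # instead of performing the side effect a second time.
        if key in self.results:
            return self.results[key]
        result = action()
        self.results[key] = result
        # Record what happened, when, who or what initiated it, and why.
        self.events.append({
            "key": key,
            "actor": actor,
            "reason": reason,
            "result": result,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return result


sent: list[str] = []


def send_invoice() -> str:
    sent.append("invoice-42")  # the side effect we must not duplicate
    return "sent"


log = ActionLog()
first = log.run_once("invoice-42:send", "billing-agent", "payment due", send_invoice)
retry = log.run_once("invoice-42:send", "billing-agent", "payment due", send_invoice)
```

In this sketch the agent can retry freely: the second call returns the first result, the invoice goes out once, and the log holds a single entry explaining who acted and why.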

Disposable Pixels: The Interface Becomes Temporary

“Disposable pixels” does not mean bad design.

It means the interface should match the task, not trap the business inside a permanent screen.

In the old model, teams designed one dashboard for many users and many situations. That forced complexity into the interface. Filters multiplied. Tables grew. Sidebars expanded. Users carried the burden of navigation.

In the AI-native model, the system already knows the task context. It knows the user role, workflow state, risk level, available actions, missing information, and required decision. The UI can become smaller, sharper, and more temporary.

The interface becomes a judgment surface.

For example:

- A CFO does not need a full finance dashboard when the system only needs approval for a cash-flow exception.

- A growth lead does not need twenty campaign charts when the system needs a decision on whether to reallocate budget.

- A compliance officer does not need to browse every document when the system can surface the failed checks, the source evidence, and the required sign-off.

- A founder does not need another strategy report when the system can show the next three constraints blocking execution.

This is where modular frontend systems matter. Next.js, shadcn/ui, typed components, and design tokens become a vocabulary for generating focused interfaces around live workflow states. The product does not need to display everything. It needs to display the next meaningful decision.

A static dashboard says, “Here is the system.”

An ephemeral interface says, “Here is the decision that needs your judgment now.”
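A judgment surface can be modeled as a function of workflow context: when no decision is pending, nothing is rendered. The sketch below assumes a hypothetical `WorkflowContext` shape and returns a plain view specification that a frontend could turn into components; none of these names come from a real framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkflowContext:
    role: str                          # who must decide
    workflow: str
    pending_decision: Optional[str]    # None when no human judgment is needed
    evidence: tuple[str, ...] = ()
    allowed_actions: tuple[str, ...] = ()


def judgment_surface(ctx: WorkflowContext) -> Optional[dict]:
    """Return a minimal view spec, or None when there is nothing to decide."""
    if ctx.pending_decision is None:
        return None
    return {
        "title": ctx.pending_decision,
        "audience": ctx.role,
        "evidence": list(ctx.evidence),
        "actions": list(ctx.allowed_actions),
    }


# Hypothetical example: the CFO sees one exception, not a finance dashboard.
cfo_view = judgment_surface(WorkflowContext(
    role="CFO",
    workflow="cash-flow-monitoring",
    pending_decision="Approve cash-flow exception",
    evidence=("variance-report-q1",),
    allowed_actions=("approve", "reject", "request_detail"),
))
quiet_view = judgment_surface(WorkflowContext("CFO", "cash-flow-monitoring", None))
```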

The Tasawom Solution: Architect the Substrate of Intent

Tasawom’s position is not to build decorative software around vague requirements. Its operating identity is strategic execution-layer engineering: turning business problems into production-grade systems, not prototypes, decks, or activity loops.

That matters because AI-native transformation is not a prompt problem. It is a substrate problem.

At Tasawom, the shift is simple:

We stop asking, “What dashboard should the client see?”

We ask, “What intent must the system understand, what workflow must it execute, what proof must it produce, and where must a human retain control?”

That produces three engineering priorities.

1. Schema-First Sovereignty

If your business process only exists in human explanation, it cannot scale through agents.

Schema-first sovereignty means the core business logic lives in structured, machine-readable form:

- customer states

- lead stages

- asset types

- approval rules

- exception categories

- risk levels

- service-level agreements

- required artifacts

- allowed actions

- ownership boundaries

The goal is not documentation for documentation’s sake. The goal is operational clarity.

When a system understands its own objects and transitions, agents can act without inventing process logic. Developers can build faster. Leaders can audit what happened. Operators can trust the workflow.
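As a concrete illustration, lead stages and their allowed transitions can live in data rather than in someone's head. This is a minimal sketch: the stage names and the transition table are assumptions chosen for the example, not a recommended sales process.

```python
from enum import Enum


class LeadStage(Enum):
    NEW = "new"
    QUALIFIED = "qualified"
    PROPOSAL = "proposal"
    WON = "won"
    LOST = "lost"


# Allowed transitions live in data, so agents and humans read the same rules.
ALLOWED_TRANSITIONS: dict[LeadStage, set[LeadStage]] = {
    LeadStage.NEW: {LeadStage.QUALIFIED, LeadStage.LOST},
    LeadStage.QUALIFIED: {LeadStage.PROPOSAL, LeadStage.LOST},
    LeadStage.PROPOSAL: {LeadStage.WON, LeadStage.LOST},
    LeadStage.WON: set(),    # terminal state
    LeadStage.LOST: set(),   # terminal state
}


def transition(current: LeadStage, target: LeadStage) -> LeadStage:
    """Return the new stage, or raise if the schema does not allow the move."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"{current.value} -> {target.value} is not an allowed transition")
    return target


stage = transition(LeadStage.NEW, LeadStage.QUALIFIED)
```

An agent calling `transition` cannot jump a new lead straight to won. The rule is enforced by the schema, not by a reviewer noticing the mistake later.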

2. Intent Engineering

Traditional project management converts goals into tasks.

Intent engineering converts goals into executable constraints.

A useful intent includes:

- the business objective

- the scope boundary

- the available data

- the tools the system may use

- the actions it may prepare

- the actions it may execute

- the approval thresholds

- the success criteria

- the rollback condition

- the evidence required for completion

This is where senior operators become contextual stewards. They do not spend their best hours moving data between tools. They refine the command surface of the business.

They make the system easier to instruct and harder to misinterpret.
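The elements of a useful intent map naturally onto a typed record that can report its own gaps. The sketch below is a simplification under stated assumptions: the `Intent` class and its field names are hypothetical, and it treats any empty field as missing, which a real policy might relax (for example, an intent that grants no execute permissions on purpose).

```python
from dataclasses import dataclass, fields


@dataclass
class Intent:
    objective: str = ""
    scope_boundary: str = ""
    available_data: tuple[str, ...] = ()
    allowed_tools: tuple[str, ...] = ()
    may_prepare: tuple[str, ...] = ()
    may_execute: tuple[str, ...] = ()
    approval_threshold: str = ""
    success_criteria: str = ""
    rollback_condition: str = ""
    required_evidence: tuple[str, ...] = ()

    def missing(self) -> list[str]:
        """Names of fields left empty; the agent should not start until this is empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# A vague instruction fills one field and leaves the rest for the agent to guess.
vague = Intent(objective="Improve retention")
gaps = vague.missing()
```

Here `gaps` lists every field except `objective`, which turns "the intent is too vague" from an opinion into a checkable condition.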

3. Ephemeral UI Generation

The interface should not become the bottleneck.

Tasawom’s frontend approach treats UI as a precise decision layer over a stronger execution substrate. The system assembles focused screens from modular primitives only when a human needs to inspect, approve, compare, or override.

That reduces interface clutter and makes the product more operationally honest.

The product does not pretend that visual polish equals automation. It uses visual structure to compress complexity at the exact point of human judgment.

Pro-Tip: If your workflow requires a person to manually copy data from one system into another, you did not automate the process. You moved the manual work into a browser. Fix the substrate before you redesign the dashboard.

The Operator’s Protocol: Four Logic Patches

To survive the death of the dashboard, software teams need structural patches, not cosmetic updates.

Patch 01: Build the Deterministic Execution Layer

Stop selling copilots that only advise.

A serious system should complete deterministic work once intent, permissions, and data are clear.

If the system identifies a market gap, it should not only write a memo. It should prepare the landing page brief, create the campaign structure, generate the CRM fields, define the tracking events, and route the approval request.

If the system detects a support escalation, it should not only summarize the complaint. It should classify the issue, retrieve account context, draft the resolution, update the ticket state, and escalate to the right owner if the risk threshold is crossed.

AI should not remain trapped at the thinking layer. It should move into the leverage layer.
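The support escalation example can be sketched as a deterministic pipeline: classify, draft, update state, and escalate only past a risk threshold. Everything here is assumed for illustration, including the `Ticket` shape, the keyword classifier, the tier-based risk score, and the 0.7 threshold. A production system would replace those placeholders with real models and policy.

```python
from dataclasses import dataclass, field

RISK_THRESHOLD = 0.7  # assumed cut-off; a real value would come from policy


@dataclass
class Ticket:
    text: str
    account_tier: str
    state: str = "open"
    category: str = ""
    draft: str = ""
    trail: list[str] = field(default_factory=list)  # evidence of each step taken


def classify(ticket: Ticket) -> str:
    # Placeholder keyword rule; a production system might call a model here.
    return "billing" if "invoice" in ticket.text.lower() else "general"


def risk_score(ticket: Ticket) -> float:
    # Hypothetical scoring: enterprise accounts carry more risk.
    return 0.9 if ticket.account_tier == "enterprise" else 0.2


def handle(ticket: Ticket) -> Ticket:
    ticket.category = classify(ticket)
    ticket.trail.append(f"classified:{ticket.category}")
    ticket.draft = f"Proposed resolution for {ticket.category} issue"
    ticket.trail.append("drafted")
    # Escalate to a human owner only when the risk threshold is crossed.
    ticket.state = "escalated" if risk_score(ticket) >= RISK_THRESHOLD else "resolved"
    ticket.trail.append(f"state:{ticket.state}")
    return ticket


high_risk = handle(Ticket("Wrong invoice amount", "enterprise"))
low_risk = handle(Ticket("How do I log in?", "starter"))
```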

Patch 02: Replace Usage Metrics with Outcome Telemetry

Usage is not value.

A user can log in every day and still produce no business result. An agent can run once and complete work that previously required a week of coordination.

Measure completed outcomes.

Build telemetry around value events, artifact quality, approval speed, exception frequency, rollback rate, and business impact. Attach evidence to every completed workflow. Make the system prove its contribution.

This also changes commercial logic. Seat-based pricing may still work for some products, but systems that deliver measurable outputs should explore unit-based, usage-based, or outcome-aligned pricing. The pricing model should reflect the work completed, not the number of people staring at screens.
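A rough sketch of outcome telemetry, assuming each workflow run is recorded with a handful of flags: the `WorkflowRun` fields and the sample data are hypothetical, and the metrics mirror the ones named above (completed outcomes, rollback rate, exception frequency, approval speed).

```python
from dataclasses import dataclass


@dataclass
class WorkflowRun:
    workflow: str
    completed: bool
    rolled_back: bool
    raised_exception: bool
    approval_minutes: float


def outcome_summary(runs: list[WorkflowRun]) -> dict[str, float]:
    """Aggregate outcome metrics; note that logins and seats appear nowhere."""
    total = len(runs)
    if total == 0:
        return {"completed": 0, "rollback_rate": 0.0,
                "exception_rate": 0.0, "avg_approval_minutes": 0.0}
    return {
        "completed": sum(r.completed for r in runs),
        "rollback_rate": sum(r.rolled_back for r in runs) / total,
        "exception_rate": sum(r.raised_exception for r in runs) / total,
        "avg_approval_minutes": sum(r.approval_minutes for r in runs) / total,
    }


# Hypothetical sample data for one workflow.
runs = [
    WorkflowRun("claim_processing", True, False, False, 12.0),
    WorkflowRun("claim_processing", True, True, False, 30.0),
    WorkflowRun("claim_processing", False, False, True, 0.0),
    WorkflowRun("claim_processing", True, False, False, 18.0),
]
summary = outcome_summary(runs)
```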

Patch 03: Harden Vertical Logic

Horizontal AI will continue to compress margins.

The defensible layer sits inside the physics of the industry.

A logistics system must understand routing constraints, customs requirements, fleet availability, exception windows, and supplier reliability. A healthcare system must understand clinical workflows, privacy rules, authorization states, and documentation requirements. A venture operating system must understand portfolio stages, funding milestones, evidence quality, market assumptions, and review cadence. A manufacturing system must understand lead times, tolerances, materials, downtime, and quality gates.

Generic AI can draft language about these domains. It cannot automatically own the operating logic unless the product encodes it.

Vertical logic is the moat.

Patch 04: Create Shared Business Memory

Fragmented SaaS creates fragmented memory.

One tool stores tasks. Another stores documents. Another stores customer history. Another stores metrics. Another stores decisions. The human becomes the integration layer.

An AI-native operating system needs a persistent record of the state of the business.

That memory should include:

- decisions made

- assumptions tested

- assets produced

- workflows completed

- exceptions encountered

- rules updated

- customer context

- performance history

- strategic priorities

- reusable playbooks

Shared memory raises switching costs because the product no longer stores isolated data. It stores operational continuity.

Direct Implementation: What To Do Now

1. Audit Your Top Three Workflows for Agent Readability

Choose three workflows that matter to revenue, cost, risk, or delivery.

For each workflow, ask:

- Can an agent understand the objective without a meeting?

- Can it access the required data through APIs or structured sources?

- Can it identify the current state of the work?

- Can it call the tools needed to progress the workflow?

- Can it distinguish low-risk actions from approval-required actions?

- Can it produce evidence of completion?

- Can a human review the decision trail?

If the answer is no, you have found strategic technical debt.

2. Kill the Wrapper

List every feature that only does one of these:

- sends a prompt to a model

- generates a generic document

- summarizes data without execution

- displays information without next action

- requires manual copy-paste to create value

Then decide:

- delete it

- move it to a free tier

- embed it inside a broader workflow

- connect it to an execution layer

Do not defend weak features because they look impressive in a demo. In 2026, demos are cheap. Operational trust is expensive.

3. Define Intent-to-Artifact Latency

Create a new metric:

Intent-to-artifact latency = the time between a strategic decision and a verified output.

Track it across different workflows:

- idea to landing page

- customer issue to resolution

- insight to campaign change

- supplier risk to mitigation action

- board request to evidence-backed report

- product decision to shipped feature

Then reduce that latency without removing human control where it matters.
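The metric is simple enough to compute from two timestamps per workflow. The sketch below assumes you can capture when the decision was made and when the artifact was verified; the function name and the sample records are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median


def intent_to_artifact_latency(decided_at: datetime, verified_at: datetime) -> timedelta:
    """Time between a strategic decision and its verified output."""
    if verified_at < decided_at:
        raise ValueError("artifact cannot be verified before the decision was made")
    return verified_at - decided_at


# Hypothetical records: (workflow, decision time, verified-artifact time)
records = [
    ("idea to landing page", datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 7, 9, 0)),
    ("idea to landing page", datetime(2026, 1, 12, 9, 0), datetime(2026, 1, 13, 15, 0)),
    ("idea to landing page", datetime(2026, 1, 19, 9, 0), datetime(2026, 1, 19, 17, 0)),
]

# Latency in hours for each run, then the median as a robust summary.
hours = [intent_to_artifact_latency(d, v).total_seconds() / 3600 for _, d, v in records]
median_hours = median(hours)
```

Tracking the median rather than the mean is one reasonable choice here, since a single stalled approval would otherwise dominate the number.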

4. Build Approval Boundaries Before Autonomy

Do not give an agent full autonomy because the demo worked once.

Define action classes:

- Suggest: The agent may recommend.

- Prepare: The agent may draft or configure.

- Execute: The agent may complete low-risk actions.

- Escalate: The agent must request approval.

- Block: The agent must stop.

This converts vague safety concerns into operational policy.
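The five action classes can be expressed as a policy table the agent must consult before acting. This is a minimal sketch: the action names and their assignments are invented for illustration, and the one firm design choice is that anything not listed defaults to Block.

```python
from enum import Enum


class ActionClass(Enum):
    SUGGEST = "suggest"
    PREPARE = "prepare"
    EXECUTE = "execute"
    ESCALATE = "escalate"
    BLOCK = "block"


# Hypothetical policy table: action name -> class.
POLICY: dict[str, ActionClass] = {
    "recommend_discount": ActionClass.SUGGEST,
    "draft_refund": ActionClass.PREPARE,
    "update_ticket_status": ActionClass.EXECUTE,
    "issue_refund": ActionClass.ESCALATE,
    "delete_customer_record": ActionClass.BLOCK,
}


def classify_action(action: str) -> ActionClass:
    # Unknown actions are blocked: autonomy is granted explicitly, never assumed.
    return POLICY.get(action, ActionClass.BLOCK)


def may_complete_without_approval(action: str) -> bool:
    """Only EXECUTE-class actions may be carried out with no human sign-off."""
    return classify_action(action) is ActionClass.EXECUTE
```

Expanding autonomy then becomes an auditable change to one table instead of a judgment call buried in a prompt.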

5. Move Senior Talent Into Contextual Stewardship

Your best operators should not spend their time reconciling spreadsheets, copying records, or formatting reports.

Move them into higher-leverage work:

- defining success criteria

- improving schemas

- validating outputs

- managing exceptions

- tuning workflow rules

- reviewing evidence

- updating business memory

- deciding where autonomy should expand or contract

The organization does not remove human judgment. It protects it from low-value labor.

The Strategic Verdict

The dashboard is not dead because visual interfaces have no value.

It is dead because manual observation is no longer the highest-value layer of software.

The next generation of software will not win by giving users more screens. It will win by taking ownership of the workflow, understanding business intent, executing deterministic tasks, preserving memory, and surfacing human judgment only where it changes the outcome.

Thin SaaS loses because it sells visibility without responsibility.

Prompt wrappers lose because they sell output without workflow ownership.

Traditional dashboards lose because they show the state of work without moving the work forward.

The future belongs to systems that operate.

Not systems that display.

Not systems that decorate.

Systems that understand the business, execute the loop, and prove the result.

Ready to build beyond the dashboard?

Stop optimizing for the human hand. Start architecting for the autonomous operating layer.

Start a Strategic Conversation with Tasawom to turn fragmented workflows into production-grade systems—or Explore our Featured Projects to see how execution-layer engineering moves from strategy to shipped software.